Jing (Daisy) Dai

M.S. Candidate at SJTU | Incoming Ph.D. Student at Virginia Tech TEA Lab

I'm fascinated by how robots can learn manipulation from human demonstrations without reducing skill to trajectory replay. My research tackles this from both sides: I build teleoperation hardware that captures high-DOF human manipulation, and I develop robot learning methods that recover task structure for transfer across different hands. In Fall 2026, I will join Simon Stepputtis's TEA Lab at Virginia Tech as a Ph.D. student in Mechanical Engineering, where I plan to continue working on dexterous manipulation and embodied intelligence.

Outside the lab, I explore questions of consciousness through science fiction and find clarity and peace in long-distance running.

Research & Projects

The bionic peacock I built in 2022 required hand-engineering each motion pattern—feasible for one demonstration, impossible for the thousands of manipulation tasks humans perform daily. This limitation shaped my research direction.

Building teleoperation systems deepened this insight: you record finger trajectories but miss what's happening to the object being manipulated. IntuitCap started as research hardware and became a commercial product at DexRobot Inc., while my RL algorithms infer object dynamics and task structure from human demonstrations. The projects below show how hardware constraints and algorithmic insights inform each other.

Task-Centric Reinforcement Learning for High-DOF Dexterous Hands

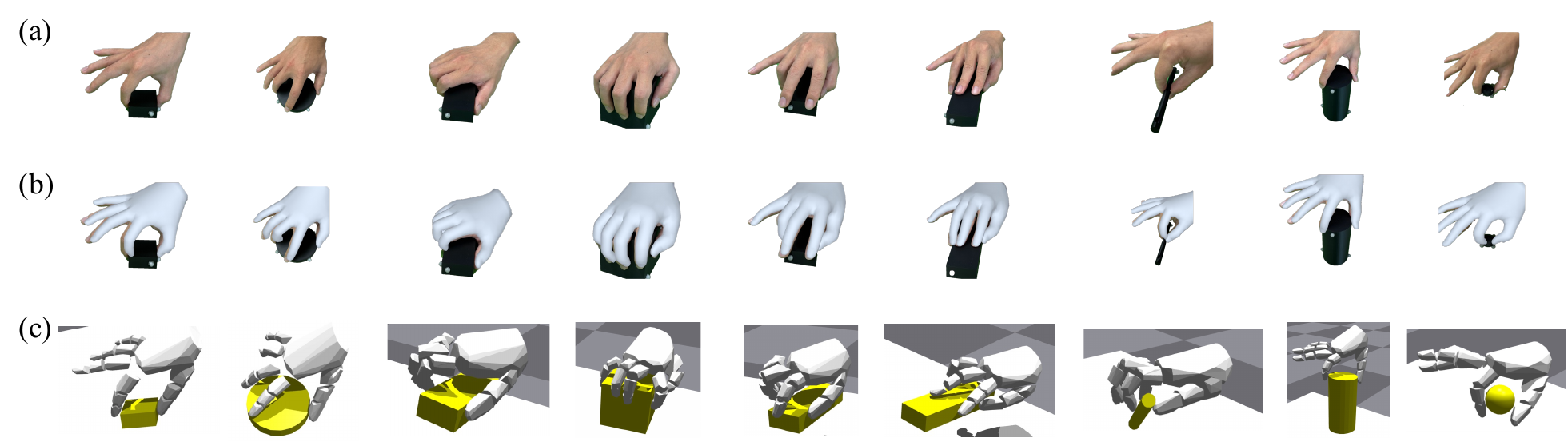

Real Human Demonstrations → Simulated MANO Hand

For DexCanvas, we captured 70 hours of human demonstrations across 21 manipulation types from the Cutkosky taxonomy. Motion capture provides geometry but misses the contact forces that produce manipulation—an indispensable part of understanding what's happening during the task. Our RL-based force extraction trains policies to control an actuated MANO hand in simulation, reproducing observed object motion.

The physics simulator measures contact forces that mocap alone cannot provide. This expands 70 hours of geometric data into 7,000 hours of physically consistent manipulation data (a 100-fold increase) with complete force annotations, enabling scalable learning across diverse manipulation tasks.
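To make the idea of "reproducing observed object motion" concrete, here is a minimal sketch of an object-pose tracking reward of the kind such a policy could be trained on. This is an illustration under stated assumptions, not the DexCanvas implementation: the function names, the exponential form, and the gains `k_pos` and `k_rot` are all hypothetical.

```python
import math

def quat_angle(q1, q2):
    """Smallest rotation angle (radians) between two unit quaternions (w, x, y, z)."""
    # abs() treats q and -q as the same rotation.
    dot = abs(sum(a * b for a, b in zip(q1, q2)))
    dot = min(1.0, dot)  # guard against rounding slightly above 1
    return 2.0 * math.acos(dot)

def tracking_reward(sim_pos, sim_quat, ref_pos, ref_quat, k_pos=10.0, k_rot=2.0):
    """Reward in (0, 1]: equals 1 when the simulated object pose matches the mocap pose.

    k_pos and k_rot are assumed gains chosen for illustration only.
    """
    pos_err = math.dist(sim_pos, ref_pos)      # metres
    rot_err = quat_angle(sim_quat, ref_quat)   # radians
    return math.exp(-k_pos * pos_err) * math.exp(-k_rot * rot_err)

# Example: simulated object is 2 cm off and rotated 90 degrees about z.
identity = (1.0, 0.0, 0.0, 0.0)
quarter_turn_z = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))
r = tracking_reward((0.0, 0.0, 0.02), quarter_turn_z, (0.0, 0.0, 0.0), identity)
```

Once a policy tracks the recorded object motion closely under a reward like this, the contact forces it applies can be read from the simulator at each step, which is the information mocap cannot supply.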

Cross-Morphology Transfer: Human Hands → Robot Hands

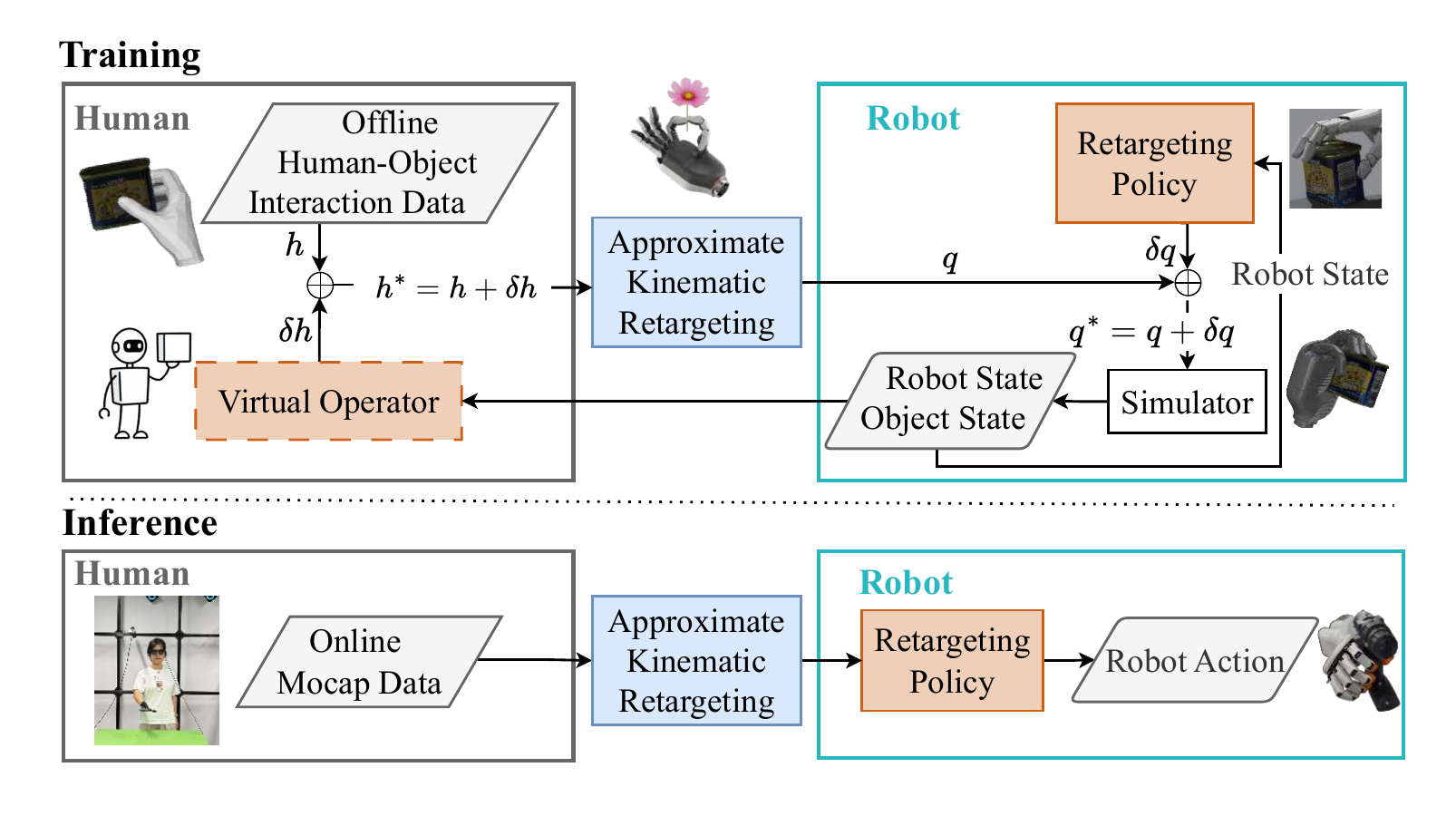

HOVER (IROS 2025 Workshop; full paper in preparation for RSS 2026) solves the retargeting challenge through a virtual operator framework. Offline demonstrations lack the closed-loop control humans provide during teleoperation. HOVER's virtual operator simulates this adaptive behavior during training.

The virtual operator sees rich context and adjusts human trajectories, while the retargeting policy sees only joint angles, which forces it to learn generalizable joint-to-joint mappings that work across tasks. This achieves both task fidelity and anthropomorphism, where existing methods trade one off against the other. The virtual operator absorbs dataset artifacts, while pure joint-level retargeting ensures generalization.

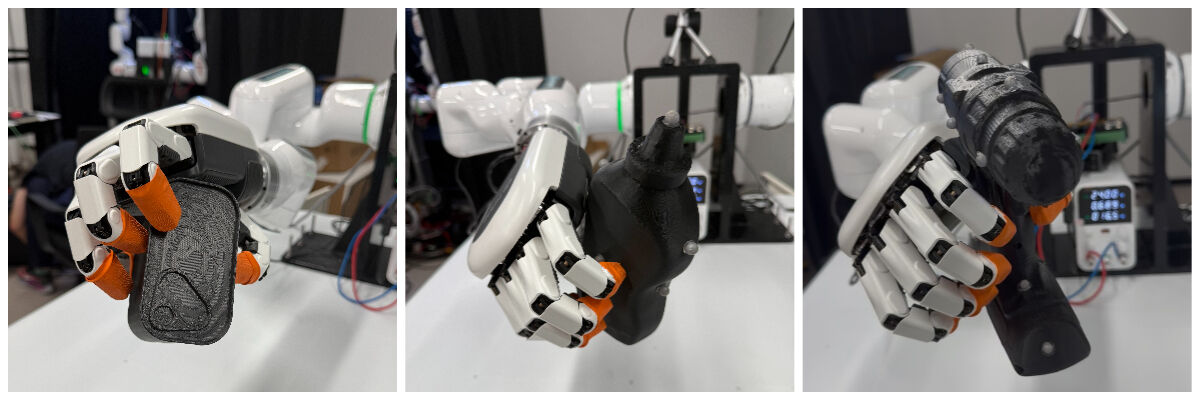

Sim2Real: Deployment on Real Dexterous Hands

Deployed on the 19-DOF DexHand021, HOVER demonstrates power grasps, enveloping grasps, and tool-handle grasps. The system achieves a 30% efficiency improvement over baseline methods in real-world teleoperation scenarios.

Our real2sim2real pipeline successfully transfers human manipulation skills to robot hands with different morphologies, demonstrating the practical viability of our approach for real-world dexterous manipulation tasks.

IntuitCap: 60-DOF Motion Capture for Dexterous Manipulation

Overview

I developed IntuitCap, a 60-DOF exoskeleton-glove system with magnetic encoders to bypass optical occlusion constraints, enabling collection of hundreds of human manipulation demonstrations across various tasks.

Key Features

- 60 magnetic encoders capturing upper-body motion

- Mapping to telexistence (Unity avatar) and teleoperation (robot control)
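As a rough illustration of what reading one of these encoders involves, the sketch below converts a raw count from an absolute rotary encoder into a signed joint angle. It is a generic example, not IntuitCap's firmware: the 12-bit resolution, the `zero_offset` calibration value, and the function name are assumptions made for illustration.

```python
import math

COUNTS_PER_REV = 4096  # assumed 12-bit encoder; the actual resolution is not stated

def counts_to_angle(raw, zero_offset, counts_per_rev=COUNTS_PER_REV):
    """Convert a raw absolute-encoder count to a joint angle in radians in [-pi, pi)."""
    # Shift by the calibrated zero pose and wrap into [0, counts_per_rev).
    delta = (raw - zero_offset) % counts_per_rev
    # Map the upper half of the range to negative angles.
    if delta >= counts_per_rev // 2:
        delta -= counts_per_rev
    return delta * 2.0 * math.pi / counts_per_rev

# A reading just past the wrap-around point still yields a small positive angle.
angle = counts_to_angle(raw=10, zero_offset=4090)
```

Because magnetic encoders measure joint rotation directly, a reading like this stays available even when the hand is hidden from any camera.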

This system became a commercial product at DexRobot Inc., where it is deployed for daily demonstration collection. The latest version, DexCap, is lighter than the version described in publications.

Bionic Robotic Peacock

A voice-controlled robot peacock that dances, spreads its tail, and struts on command. The challenge was coordinating 11 different motors to create natural, lifelike movements that captivate audiences.

The tail uses servo motors for precise feather movements, while the neck and wings employ brushless motors for smooth motion. After several iterations on the walking gait, we achieved a confident peacock strut that brings smiles to exhibition visitors.

About Me

Education

Incoming Ph.D. in Mechanical Engineering

Virginia Tech

Starting Aug. 2026

Advisor: Simon Stepputtis | TEA Lab (Thinking Embodied Agents)

M.S. in Mechanical Engineering

Shanghai Jiao Tong University

Sep. 2023 - Jun. 2026 (Expected)

Advisor: Weixin Yan - Associate Professor of ME, SJTU

B.E. in Mechanical Design, Manufacturing and Automation

Hunan University

Sep. 2019 - Jun. 2023

Beyond the Lab

Outside research, I'm drawn to science fiction that explores intelligence and identity—in books like Flowers for Algernon and Ted Chiang's Story of Your Life, and films like Blade Runner and Ghost in the Shell. They grapple with questions that haunt me: what constitutes consciousness? Where's the boundary between human and machine? But what draws me most is how these stories make the familiar strange—forcing me to examine what we take for granted about being human.

I run long distances—5K to half-marathons. When I'm running well, every muscle responds precisely, and I feel both light and completely focused. These moments make me appreciate the delicate, exquisite design of the human body—how joints articulate, how force transfers through bone and tissue, how everything coordinates without conscious thought. It's one thing to study biomechanics for robot design; it's another to feel that engineering elegance from within.